If you’re running LLM calls at any real volume, you’ve already noticed how fast the token bills compound. LLM caching cost reduction isn’t a niche optimization — it’s one of the highest-leverage things you can do before scaling infrastructure or switching models. I’ve seen teams cut 40–50% off their monthly API spend by implementing two or three of the patterns covered here, without any visible change to end-user experience.

This article covers the approaches that actually work in production: Anthropic’s prompt caching for Claude, OpenAI’s equivalent, semantic response memoization, and context reuse patterns that apply across any model. I’ll include working code for each and be honest about where they fail.

Why Token Costs Compound So Aggressively

Most LLM cost overruns follow the same pattern: a large system prompt sent on every request, repeated context that doesn’t change between calls, or identical queries being re-executed multiple times. At input prices between $3 and $15 per million tokens (list prices vary by model and change often), sending a 4,000-token system prompt on every call costs roughly $0.012–$0.06 per request just for the context. At 10,000 daily calls, that’s $120–$600/day from the system prompt alone.
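The per-request arithmetic is easy to sketch. The function name and the prices in the loop below are illustrative assumptions, not vendor quotes, so substitute your own numbers:

```python
def static_prefix_cost_per_day(
    prefix_tokens: int,
    price_per_million_usd: float,
    calls_per_day: int,
) -> float:
    """Daily spend attributable to re-sending the same prefix on every call."""
    cost_per_call = prefix_tokens / 1_000_000 * price_per_million_usd
    return cost_per_call * calls_per_day


if __name__ == "__main__":
    # Illustrative prices only; check the vendor's current price list.
    for price in (3.0, 15.0):
        daily = static_prefix_cost_per_day(4_000, price, 10_000)
        print(f"${price}/M input tokens -> ${daily:,.2f}/day")
```

Running this against your real prompt length and call volume is a quick way to decide whether the static prefix is worth optimizing at all.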

The fix isn’t always “use a cheaper model.” Sometimes it’s sending fewer tokens, reusing computations, or batching intelligently. Let’s go through each approach.

Anthropic Prompt Caching: The Highest-ROI Option Right Now

Anthropic’s prompt caching is the most impactful single change for Claude users. Once you mark a block of content as cacheable, Anthropic stores a KV representation of those tokens server-side. Subsequent requests that hit the cache pay only 10% of the normal input token price for those cached tokens.

Cache writes cost 25% more than normal input tokens, but cache reads cost 90% less. The math works in your favor after the second or third call on any session with a large static prefix.
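A rough break-even check, assuming the 1.25x write and 0.10x read multipliers quoted above and assuming every call after the first lands inside the cache TTL (the function name is hypothetical):

```python
def cumulative_prefix_cost(
    calls: int,
    prefix_tokens: int,
    base_price_per_million_usd: float,
    cached: bool,
    write_multiplier: float = 1.25,  # assumed: cache write costs 125% of base
    read_multiplier: float = 0.10,   # assumed: cache read costs 10% of base
) -> float:
    """Total cost of the static prefix over `calls` requests.

    With caching, the first call pays the write price and every later
    call is assumed to be a cache hit.
    """
    per_call = prefix_tokens / 1_000_000 * base_price_per_million_usd
    if not cached or calls == 0:
        return per_call * calls
    return per_call * (write_multiplier + read_multiplier * (calls - 1))


if __name__ == "__main__":
    for n in (1, 2, 3, 10):
        plain = cumulative_prefix_cost(n, 3_000, 3.0, cached=False)
        with_cache = cumulative_prefix_cost(n, 3_000, 3.0, cached=True)
        print(f"{n:>2} calls: uncached ${plain:.5f} vs cached ${with_cache:.5f}")
```

Under these assumptions a single call is more expensive with caching, and the cached path is already cheaper by the second call.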

How to Implement It

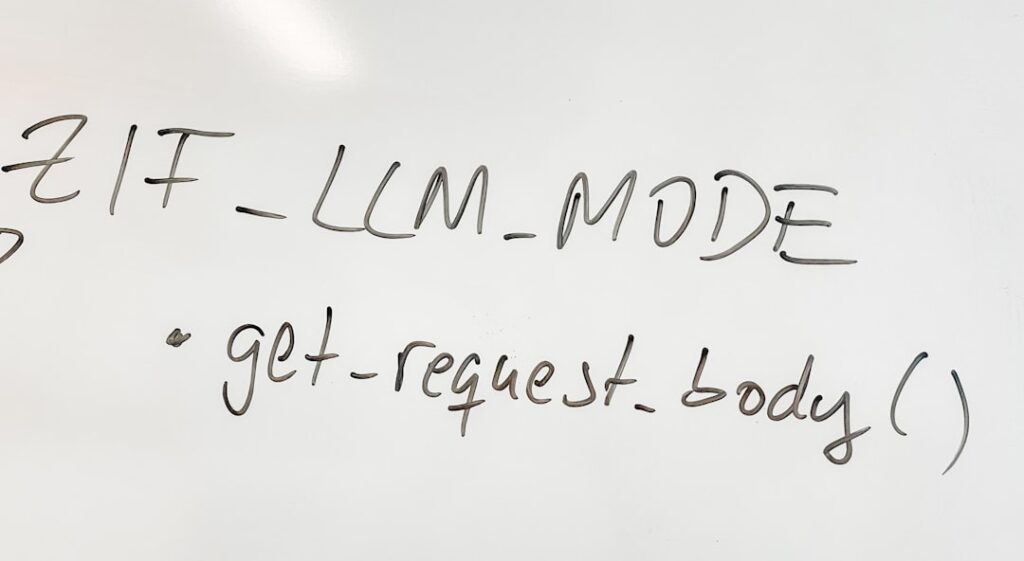

You add cache_control blocks to your message content. The cache key is implicitly defined by the content — identical content at the same position hits the same cache entry. Cache entries expire after 5 minutes of inactivity (as of writing — verify this in Anthropic’s docs as it’s been updated).

import anthropic

client = anthropic.Anthropic()

# Your large, static system context: documents, instructions, examples
SYSTEM_CONTEXT = """
[Imagine 3,000 tokens of product documentation, tool definitions, or few-shot examples here]
""".strip()


def run_with_prompt_cache(user_message: str) -> str:
    response = client.messages.create(
        model="claude-3-5-sonnet-20241022",
        max_tokens=1024,
        system=[
            {
                "type": "text",
                "text": SYSTEM_CONTEXT,
                "cache_control": {"type": "ephemeral"},  # Mark for caching
            }
        ],
        messages=[
            {"role": "user", "content": user_message}
        ],
    )

    # Check cache performance in usage stats
    usage = response.usage
    print(f"Input tokens: {usage.input_tokens}")
    print(f"Cache write tokens: {getattr(usage, 'cache_creation_input_tokens', 0)}")
    print(f"Cache read tokens: {getattr(usage, 'cache_read_input_tokens', 0)}")

    return response.content[0].text

The first call writes to cache (you pay 125% of normal input cost for the cached block). Every subsequent call within the TTL window pays 10% of normal cost for those tokens. On a 3,000-token system prompt at Claude Sonnet pricing (~$3/M input tokens), that drops from ~$0.009 to ~$0.0009 per call for the static portion.

What Actually Breaks With Prompt Caching

Cache hits require exact prefix matching. If your system prompt changes at all — even whitespace — you write a new cache entry. This means you can’t dynamically inject per-user data into the cacheable block. Structure your prompts so static content (instructions, tools, examples) comes first, and dynamic content (user context, current date, session data) goes after the cached block in the human turn.
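One way to enforce that split is to build the request in a single helper so that per-user data can never leak into the cached block. This is a sketch: `build_cached_request` and its parameter names are hypothetical, and only the `cache_control` shape comes from the example earlier in this article.

```python
def build_cached_request(static_context: str, user_context: str, user_message: str) -> dict:
    """Return keyword arguments with static content cached and dynamic content after it."""
    return {
        # Static block: must be byte-identical across calls to hit the cache.
        "system": [
            {
                "type": "text",
                "text": static_context,
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # Dynamic data (user profile, date, session state) travels in the user turn.
        "messages": [
            {"role": "user", "content": f"{user_context}\n\n{user_message}"}
        ],
    }


if __name__ == "__main__":
    a = build_cached_request("STATIC INSTRUCTIONS", "User: alice, plan: pro", "Hi")
    b = build_cached_request("STATIC INSTRUCTIONS", "User: bob, plan: free", "Hi")
    print(a["system"] == b["system"])  # the cacheable prefix is identical for both users
```

The returned dict can be unpacked into the call shown earlier, for example `client.messages.create(model=..., max_tokens=..., **build_cached_request(...))`.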

Also: the 5-minute TTL means prompt caching works best for high-frequency call patterns. If your users interact once every 30 minutes, you’ll mostly be paying cache write costs without getting the read benefit. In that case, look at response memoization instead.
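To put a number on "mostly paying cache write costs", here is a small sketch that treats every cache miss as a write at 1.25x and every hit as a read at 0.10x. Those multipliers are the ones quoted in this article; the function names are hypothetical.

```python
def expected_prefix_multiplier(
    hit_rate: float,
    write_multiplier: float = 1.25,
    read_multiplier: float = 0.10,
) -> float:
    """Average price of the cached prefix relative to uncached input (1.0 = no change)."""
    return hit_rate * read_multiplier + (1.0 - hit_rate) * write_multiplier


def break_even_hit_rate(
    write_multiplier: float = 1.25,
    read_multiplier: float = 0.10,
) -> float:
    """Hit rate at which caching costs exactly the same as not caching."""
    return (write_multiplier - 1.0) / (write_multiplier - read_multiplier)


if __name__ == "__main__":
    print(f"break-even hit rate: {break_even_hit_rate():.1%}")
    for rate in (0.0, 0.2, 0.5, 0.9):
        print(f"hit rate {rate:.0%}: {expected_prefix_multiplier(rate):.3f}x base price")
```

With these multipliers the break-even sits a little above a 20% hit rate, so measure your real hit rate from the usage fields before assuming caching is a net win for sparse traffic.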

OpenAI Automatic Prompt Caching

OpenAI introduced automatic prompt caching for GPT-4o and o1 models in late 2024. Unlike Anthropic’s opt-in approach, it’s automatic — no API changes required. Prompts longer than 1,024 tokens that share a common prefix get cached at the infrastructure level.

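Cache hits cost 50% of the normal input token price (versus Anthropic’s 10%). Still valuable, but the math is less dramatic. You don’t pay extra for cache writes. Retention is not something you control: OpenAI’s documentation describes cached prefixes as typically evicted after several minutes of inactivity and possibly persisting for up to an hour during off-peak periods, with no guarantee either way.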

The practical implication: with OpenAI, just make sure your static system prompt comes first and is consistent across calls. With Anthropic, you need explicit cache_control blocks. Both reward the same underlying pattern: stable content at the top of your context.
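Because OpenAI's caching is implicit, the only way to know whether it is working is to read the usage data. The sketch below assumes the response's `usage` object exposes `prompt_tokens` and `prompt_tokens_details.cached_tokens`, as OpenAI's Chat Completions responses do at the time of writing; the helper name is hypothetical, and the demo uses a stand-in object rather than a live API call.

```python
from types import SimpleNamespace


def cached_fraction(usage) -> float:
    """Share of prompt tokens that were served from the prompt cache."""
    details = getattr(usage, "prompt_tokens_details", None)
    cached = getattr(details, "cached_tokens", 0) or 0
    prompt = getattr(usage, "prompt_tokens", 0) or 0
    return cached / prompt if prompt else 0.0


if __name__ == "__main__":
    # Stand-in for `response.usage` from a real chat completion.
    fake_usage = SimpleNamespace(
        prompt_tokens=2048,
        prompt_tokens_details=SimpleNamespace(cached_tokens=1024),
    )
    print(f"cached share of prompt: {cached_fraction(fake_usage):.0%}")
```

Logging this ratio per request makes it obvious when a prompt change has accidentally broken the shared prefix.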

Response Memoization: Model-Agnostic Cost Reduction

Prompt caching helps with input tokens. Response memoization eliminates redundant API calls entirely by caching complete responses for identical (or near-identical) inputs. This works for any model and is often the first thing to implement for deterministic or low-variability use cases.

Exact Match Caching

For pipelines where the same query recurs — document classification, structured extraction from templates, FAQ responses — exact match caching is trivially easy and dramatically effective.

import hashlib
import json

import redis
from anthropic import Anthropic

client = Anthropic()
cache = redis.Redis(host="localhost", port=6379, decode_responses=True)


def get_cache_key(model: str, messages: list, system: str = "") -> str:
    """Stable hash of the full request parameters."""
    payload = json.dumps({
        "model": model,
        "messages": messages,
        "system": system,
    }, sort_keys=True)
    return f"llm:exact:{hashlib.sha256(payload.encode()).hexdigest()}"


def cached_completion(
    messages: list,
    model: str = "claude-3-haiku-20240307",
    system: str = "",
    ttl_seconds: int = 3600,
) -> str:
    key = get_cache_key(model, messages, system)

    # Check cache first
    cached = cache.get(key)
    if cached is not None:
        return json.loads(cached)

    # Cache miss: call the API
    response = client.messages.create(
        model=model,
        max_tokens=1024,
        system=system,
        messages=messages,
    )
    result = response.content[0].text

    # Store with TTL
    cache.setex(key, ttl_seconds, json.dumps(result))
    return result

This runs well in production with Redis. At ~$0.00025 per Haiku call for a short prompt, caching 5,000 repeat queries per day saves around $1.25/day — modest until you realize a single recurring template query in a busy SaaS product might hit 50,000 times daily.

Semantic Caching With Embeddings

Exact match caching misses semantically equivalent queries: “What’s the return policy?” and “How do I return an item?” should return the same cached response. Semantic caching uses embedding similarity to find near-matches.

import numpy as np
from openai import OpenAI

openai_client = OpenAI()


def get_embedding(text: str) -> list[float]:
    response = openai_client.embeddings.create(
        model="text-embedding-3-small",  # ~$0.00002 per 1K tokens, cheap
        input=text,
    )
    return response.data[0].embedding


def cosine_similarity(a: list[float], b: list[float]) -> float:
    a_arr, b_arr = np.array(a), np.array(b)
    return float(np.dot(a_arr, b_arr) / (np.linalg.norm(a_arr) * np.linalg.norm(b_arr)))


class SemanticCache:
    def __init__(self, threshold: float = 0.92):
        self.threshold = threshold
        # In production, use a vector DB: Pinecone, Qdrant, pgvector
        self.entries: list[dict] = []

    def lookup(self, query: str) -> str | None:
        query_embedding = get_embedding(query)
        # Return the closest entry at or above the threshold,
        # not merely the first one that clears it
        best_response, best_similarity = None, self.threshold
        for entry in self.entries:
            similarity = cosine_similarity(query_embedding, entry["embedding"])
            if similarity >= best_similarity:
                best_response, best_similarity = entry["response"], similarity
        return best_response

    def store(self, query: str, response: str):
        self.entries.append({
            "embedding": get_embedding(query),
            "response": response,
            "query": query,
        })

The threshold is the key tunable parameter. At 0.92, you’ll get high-confidence semantic matches. At 0.85, you’ll start serving cached responses for queries that are related but not identical — which can cause subtle correctness bugs. I’d recommend starting at 0.92 and only lowering it with explicit testing on your actual query distribution.
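Testing a threshold can be as simple as scoring a hand-labeled set of query pairs. The harness below is a sketch with hypothetical names; `embed` is whatever embedding function you use, and the demo substitutes hard-coded toy vectors so it runs without an API key.

```python
import math
from typing import Callable, Iterable, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def evaluate_threshold(
    pairs: Iterable[Tuple[str, str, bool]],
    embed: Callable[[str], Sequence[float]],
    threshold: float,
) -> dict:
    """Count wrong cache hits and missed hits for labeled (query_a, query_b, same_intent) pairs."""
    false_hits = missed_hits = 0
    for query_a, query_b, same_intent in pairs:
        is_hit = cosine(embed(query_a), embed(query_b)) >= threshold
        if is_hit and not same_intent:
            false_hits += 1   # would serve a wrong cached answer
        elif not is_hit and same_intent:
            missed_hits += 1  # would pay for an avoidable API call
    return {"false_hits": false_hits, "missed_hits": missed_hits}


if __name__ == "__main__":
    # Toy vectors standing in for real embeddings.
    toy = {
        "What's the return policy?": [1.0, 0.0],
        "How do I return an item?": [0.96, 0.28],
        "What's the shipping policy?": [0.88, 0.475],
    }
    labeled = [
        ("What's the return policy?", "How do I return an item?", True),
        ("What's the return policy?", "What's the shipping policy?", False),
    ]
    for t in (0.85, 0.92, 0.97):
        print(t, evaluate_threshold(labeled, toy.__getitem__, t))
```

False hits are the expensive error here, since they return a wrong answer silently, so it is usually reasonable to accept some missed hits to keep false hits at zero.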

For production semantic caching, use a vector database with ANN (approximate nearest neighbor) search. Qdrant and pgvector are both solid choices — don’t use in-memory lists at scale.

Context Reuse and Conversation State Management

Multi-turn agents and chatbots burn tokens by re-sending the full conversation history on every turn. In a 20-turn conversation where each turn averages 500 tokens, turn 20 pays for roughly 9,500 tokens of history (the 19 earlier turns) before the new message even starts.
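The per-turn figure understates the problem, because history is paid for again on every turn and the total grows quadratically. Here is a sketch under the simplifying assumption that every turn is the same size; the function names and the optional `max_history_turns` cap are hypothetical.

```python
from typing import Optional


def history_tokens(turn: int, tokens_per_turn: int, max_history_turns: Optional[int] = None) -> int:
    """Tokens of earlier conversation re-sent at a given 1-indexed turn."""
    prior_turns = turn - 1
    if max_history_turns is not None:
        prior_turns = min(prior_turns, max_history_turns)
    return prior_turns * tokens_per_turn


def total_history_tokens(turns: int, tokens_per_turn: int, max_history_turns: Optional[int] = None) -> int:
    """History tokens billed across the whole conversation."""
    return sum(
        history_tokens(t, tokens_per_turn, max_history_turns)
        for t in range(1, turns + 1)
    )


if __name__ == "__main__":
    print("history at turn 20:", history_tokens(20, 500))
    print("total over 20 turns:", total_history_tokens(20, 500))
    print("total with an 8-turn cap:", total_history_tokens(20, 500, max_history_turns=8))
```

Comparing the uncapped and capped totals for your own session lengths gives a quick estimate of what bounding the history is worth.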

Sliding Window Context

The simplest fix: only send the N most recent turns plus the system prompt. For most conversational tasks, the last 6–10 turns contain everything the model needs.

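def build_windowed_messages(
    history: list[dict],
    new_message: str,
    window_size: int = 8,
) -> list[dict]:
    """Keep only the most recent messages to control context length."""
    recent = history[-window_size:]
    # The Messages API expects the conversation to open with a user message,
    # so drop any leading assistant message left over by the cut.
    while recent and recent[0]["role"] != "user":
        recent = recent[1:]
    return recent + [{"role": "user", "content": new_message}]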

Summarization Compression

When you need to preserve information beyond the window but can’t send full history, compress older turns into a rolling summary. This adds one cheap summarization call every N turns but keeps context tokens bounded.

def compress_history(history: list[dict], keep_recent: int = 4) -> list[dict]:
    """Summarize old turns, keep recent ones verbatim."""
    if len(history) <= keep_recent + 2:
        return history

    old_turns = history[:-keep_recent]
    recent_turns = history[-keep_recent:]

    # Summarize old context with a cheap model
    # (`client` is the Anthropic client created in the earlier examples)
    summary_prompt = "Summarize this conversation history concisely, preserving key facts and decisions:\n\n"
    summary_prompt += "\n".join([f"{m['role']}: {m['content']}" for m in old_turns])

    summary_response = client.messages.create(
        model="claude-3-haiku-20240307",  # Use the cheapest capable model
        max_tokens=512,
        messages=[{"role": "user", "content": summary_prompt}],
    )
    summary = summary_response.content[0].text

    return [
        {"role": "user", "content": f"[Previous conversation summary: {summary}]"},
        {"role": "assistant", "content": "Understood."},
    ] + recent_turns

Combining Strategies: A Real-World Stack

In production, these aren’t mutually exclusive. Here’s the layered approach I’d use for a high-volume agent:

- Layer 1 — Exact response cache (Redis, TTL 1h): catches deterministic, repeated queries at zero API cost

- Layer 2 — Semantic cache (vector DB, threshold 0.92): catches near-identical queries

- Layer 3 — Prompt caching (Anthropic cache_control): reduces input token cost for cache misses that still need API calls

- Layer 4 — Context window management: sliding window + compression for multi-turn sessions

Each layer handles a different failure mode of the one above it. Together, they target the 30–50% cost reduction that’s achievable without degrading response quality.
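The lookup order can be sketched as a small dispatcher. Everything here is hypothetical scaffolding (`CacheLayer`, `answer`, the `stats` dict): each layer only has to supply a `lookup` and a `store` callable, and the model call is injected so the flow can be exercised without an API key.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class CacheLayer:
    name: str
    lookup: Callable[[str], Optional[str]]  # returns None on a miss
    store: Callable[[str, str], None]


def answer(
    query: str,
    layers: List[CacheLayer],
    call_model: Callable[[str], str],
    stats: Dict[str, int],
) -> str:
    """Try each cache layer in order; fall through to the model on a full miss."""
    for layer in layers:
        cached = layer.lookup(query)
        if cached is not None:
            stats[layer.name] = stats.get(layer.name, 0) + 1
            return cached

    # Full miss: pay for the model call, then populate every layer.
    stats["model"] = stats.get("model", 0) + 1
    response = call_model(query)
    for layer in layers:
        layer.store(query, response)
    return response


if __name__ == "__main__":
    exact: Dict[str, str] = {}
    layers = [CacheLayer("exact", exact.get, exact.__setitem__)]
    stats: Dict[str, int] = {}

    def fake_model(q: str) -> str:  # stand-in for a real API call
        return q.upper()

    answer("what is the return policy?", layers, fake_model, stats)
    answer("what is the return policy?", layers, fake_model, stats)
    print(stats)
```

Keeping a per-layer counter like `stats` from the start makes it straightforward to see which layer is actually earning its keep.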

What to Use Based on Your Situation

Solo founder or small team with unpredictable query patterns: Start with exact response caching using Redis and Anthropic prompt caching. These require minimal infrastructure and deliver the most immediate ROI. Skip semantic caching until you’ve validated the query distribution.

Production SaaS with recurring query patterns: Add semantic caching with pgvector (if you’re already on Postgres) or Qdrant. The embedding cost (~$0.00002/query) is negligible against the LLM calls you’re avoiding.

Agent or chatbot with multi-turn sessions: Sliding window context is non-negotiable. Combine it with prompt caching for your system prompt and tool definitions. Summarization compression is worth adding once average session length exceeds 15 turns.

High-volume, latency-sensitive pipelines: All four layers. Invest in a proper vector database, monitor cache hit rates by layer, and treat your cache key design as seriously as your prompt engineering. A 45% LLM caching cost reduction at scale is the difference between a margin-positive and margin-negative AI feature.

Editorial note: API pricing, model capabilities, and tool features change frequently, so always verify current details on the vendor’s website before building in production. Code examples are tested at time of writing; pin your dependency versions to avoid breaking changes.