Most teams shipping LLM-powered agents have no idea whether their prompts are actually improving. They tweak a system prompt, eyeball a few outputs, and ship — then wonder why their product feels inconsistent. The teams that build durable, production-grade agents do something different: they treat LLM evaluation metrics like any other engineering measurement, establish baselines before making changes, and run reproducible benchmarks against the specific failure modes that actually matter for their use case.

This article covers how to build that system. You’ll walk away with a working evaluation harness, a sensible set of metrics for agent output quality, and a framework for A/B testing prompts and models that gives you statistically meaningful results — not gut feelings.

Why Most LLM Evaluation Approaches Fall Apart

The common failure mode is evaluating on vibes. Someone runs a new prompt through five test cases, the outputs “look better,” and the change ships. The problems:

- Five examples isn’t a sample size — you need enough coverage to catch tail failures and edge cases

- Human evaluation doesn’t scale — you can’t manually review 500 outputs every time you change a prompt

- “Looks better” isn’t a metric — you need to know better on what dimension, by how much, and at what cost

- No baseline means no measurement — you can’t know if you improved if you never measured the starting point

The second failure mode is benchmarking against the wrong thing. MMLU, HumanEval, and similar public benchmarks tell you about general model capability. They tell you almost nothing about whether GPT-4o is better than Claude Sonnet for your specific task — extracting structured data from support tickets, classifying customer intent, generating SQL from natural language in your schema. Build your own evals first.

The Four Metric Categories That Actually Matter

For production agents, I group LLM evaluation metrics into four buckets. Every agent needs coverage in all four — optimising only one will get you burned.

1. Output Quality Metrics

These measure whether the output is correct and useful. The right metrics depend on your task type:

- Exact match / F1 — for structured extraction, classification, and any task with a ground-truth answer. Hard to game, easy to compute.

- Semantic similarity — use sentence-transformers or the OpenAI embeddings API to measure cosine distance between expected and actual output. Useful when exact wording doesn’t matter but meaning does. Watch out: two semantically similar strings can have opposite facts.

- LLM-as-judge — use a model (typically GPT-4o or Claude Sonnet) to score outputs on defined rubric dimensions. Scales well, but introduces its own biases; models tend to prefer their own outputs and longer responses.

- Task-specific metrics — ROUGE/BLEU for summarisation, pass@k for code generation, citation accuracy for RAG pipelines. Use these when they map cleanly to what your users actually care about.
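As a rough illustration of the exact match / F1 bullet above, here is a minimal token-overlap F1 scorer. The function name `token_f1` and the whitespace, lowercase tokenisation are assumptions made for this sketch; a real extraction task would usually compare parsed fields rather than raw tokens.

```python
from collections import Counter


def token_f1(output: str, expected: str) -> float:
    """Token-overlap F1 between output and expected (lowercased whitespace tokens)."""
    out_tokens = output.lower().split()
    exp_tokens = expected.lower().split()
    if not out_tokens or not exp_tokens:
        # Both empty counts as a match; exactly one empty counts as a miss.
        return 1.0 if out_tokens == exp_tokens else 0.0
    # Multiset intersection: each token counts at most as often as it appears in both.
    overlap = sum((Counter(out_tokens) & Counter(exp_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(out_tokens)
    recall = overlap / len(exp_tokens)
    return 2 * precision * recall / (precision + recall)
```

Because it takes `output` and `expected` keyword arguments, a scorer shaped like this could sit alongside an exact-match scorer and give partial credit where exact match is too strict.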

2. Reliability Metrics

Reliability is underrated. An agent that’s right 80% of the time but fails catastrophically on 5% of inputs is often worse than an agent that’s right 70% of the time consistently. Track:

- Format compliance rate — if you asked for JSON, did you get valid JSON? Track this separately from content quality.

- Refusal rate — how often does the model refuse a legitimate request? This matters a lot in production customer-facing agents.

- Hallucination rate — especially for RAG, measure how often the model asserts something not supported by the provided context.

- Consistency / variance — run the same prompt 10 times at temperature 0.7. How much do outputs vary? High variance is a problem for anything user-facing.
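Two of the reliability metrics above are easy to compute from a list of raw outputs. The sketch below is one possible way to do it; the helper names `format_compliance_rate` and `modal_agreement` are made up for illustration, and treating "consistency" as the share of runs that agree with the most common answer is an assumption that fits short outputs such as labels better than free text.

```python
import json
from collections import Counter


def format_compliance_rate(outputs: list[str]) -> float:
    """Fraction of outputs that parse as JSON."""
    if not outputs:
        return 0.0
    valid = 0
    for text in outputs:
        try:
            json.loads(text)
            valid += 1
        except json.JSONDecodeError:
            pass
    return valid / len(outputs)


def modal_agreement(outputs: list[str]) -> float:
    """Share of repeated runs that match the most common output.

    Outputs are compared after stripping whitespace and lowercasing.
    """
    if not outputs:
        return 0.0
    counts = Counter(text.strip().lower() for text in outputs)
    return counts.most_common(1)[0][1] / len(outputs)
```

For the "same prompt 10 times" check, you would collect the ten outputs for one case and pass them to `modal_agreement`; a value well below 1.0 flags a case worth inspecting.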

3. Cost Metrics

This is where most teams are flying blind. You need to track per-run cost broken down by prompt tokens and completion tokens, because the ratio matters. A prompt-heavy chain costs very differently from a completion-heavy one when you switch models.

At current pricing: GPT-4o runs roughly $2.50/1M input tokens and $10/1M output tokens. Claude 3.5 Sonnet is $3/1M input and $15/1M output. Claude 3.5 Haiku is $0.80/1M input and $4/1M output. For a simple classification task generating 50 tokens per run, Haiku costs roughly $0.0002 per call in output tokens (50 × $4/1M), so before counting the prompt you’d run 5,000 calls for a dollar. Swap that to GPT-4o and it’s still cheap, but if your eval suite runs 10,000 calls per deployment, model choice starts mattering.
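To make the arithmetic concrete, here is a small sketch that prices a whole eval suite from the per-million-token rates quoted above. The 400-token prompt is an assumed figure chosen for illustration (the text only fixes the 50 output tokens), and `suite_cost` is a hypothetical helper name.

```python
# USD per 1M tokens as (input, output), copied from the paragraph above.
PRICES_PER_1M = {
    "GPT-4o": (2.50, 10.00),
    "Claude Sonnet 3.5": (3.00, 15.00),
    "Claude Haiku 3.5": (0.80, 4.00),
}


def suite_cost(input_price: float, output_price: float,
               input_tokens: int, output_tokens: int, calls: int) -> float:
    """Total USD cost of `calls` runs with the given per-call token counts."""
    per_call = (input_tokens * input_price + output_tokens * output_price) / 1_000_000
    return per_call * calls


def compare_models(input_tokens: int = 400, output_tokens: int = 50,
                   calls: int = 10_000) -> dict[str, float]:
    """Suite cost per model. The 400-token prompt default is an assumption."""
    return {
        name: suite_cost(inp, out, input_tokens, output_tokens, calls)
        for name, (inp, out) in PRICES_PER_1M.items()
    }
```

Under those assumptions a 10,000-call suite comes to about $15.00 on GPT-4o, $19.50 on Sonnet, and $5.20 on Haiku. Note that with a 400-token prompt the input side is most of the bill, which is why the prompt/completion ratio is worth tracking.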

4. Latency Metrics

Track p50, p90, and p99 latency — not just averages. A model that’s fast on average but has a p99 of 45 seconds will destroy any synchronous user experience. Also track time-to-first-token separately if you’re streaming, since it determines perceived responsiveness more than total latency does.
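A minimal sketch of the percentile tracking described above, using the nearest-rank definition (other definitions interpolate between samples and give slightly different numbers). The function names are illustrative.

```python
import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("percentile() needs at least one sample")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_summary(latencies_ms: list[float]) -> dict[str, float]:
    """p50 / p90 / p99 plus the mean, to show how far the mean can mislead."""
    return {
        "p50": percentile(latencies_ms, 50),
        "p90": percentile(latencies_ms, 90),
        "p99": percentile(latencies_ms, 99),
        "mean": sum(latencies_ms) / len(latencies_ms),
    }
```

With 98 calls at 120 ms and two at 45,000 ms, p50 and p90 stay at 120 ms while p99 jumps to 45,000 ms; the mean (about 1,018 ms) describes neither the typical nor the worst case.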

Building a Reproducible Evaluation Harness

Here’s a minimal but production-usable eval harness in Python. It supports multiple models, logs all metrics to a SQLite database for later comparison, and is designed to be extended with your own scorers.

import time
import json
import sqlite3
import hashlib
from dataclasses import dataclass, field
from typing import Callable, Optional

import anthropic
import openai

@dataclass
class EvalCase:
    id: str
    prompt: str
    expected: Optional[str] = None
    metadata: dict = field(default_factory=dict)


@dataclass
class EvalResult:
    case_id: str
    model: str
    output: str
    latency_ms: float
    input_tokens: int
    output_tokens: int
    cost_usd: float
    scores: dict = field(default_factory=dict)

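# Model pricing per 1M tokens — update these regularly.
# The keys are also passed to the API as the model name, so they must match
# the exact model IDs your provider accepts (Anthropic IDs look like
# "claude-3-5-haiku-20241022"). Adjust them before running.
MODEL_PRICING = {
    "claude-haiku-3-5": {"input": 0.80, "output": 4.00},
    "claude-sonnet-3-5": {"input": 3.00, "output": 15.00},
    "gpt-4o": {"input": 2.50, "output": 10.00},
    "gpt-4o-mini": {"input": 0.15, "output": 0.60},
}


def compute_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    # Unknown models are priced at zero rather than raising.
    pricing = MODEL_PRICING.get(model, {"input": 0, "output": 0})
    return (input_tokens * pricing["input"] + output_tokens * pricing["output"]) / 1_000_000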

def run_anthropic(prompt: str, model: str) -> tuple[str, int, int, float]:
    """Returns (output, input_tokens, output_tokens, latency_ms)."""
    client = anthropic.Anthropic()
    start = time.monotonic()
    response = client.messages.create(
        model=model,
        max_tokens=1024,
        messages=[{"role": "user", "content": prompt}]
    )
    latency = (time.monotonic() - start) * 1000
    output = response.content[0].text
    return output, response.usage.input_tokens, response.usage.output_tokens, latency


def run_openai(prompt: str, model: str) -> tuple[str, int, int, float]:
    """Returns (output, input_tokens, output_tokens, latency_ms)."""
    client = openai.OpenAI()
    start = time.monotonic()
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}]
    )
    latency = (time.monotonic() - start) * 1000
    # content can be None (e.g. on a refusal); fall back to "" so scorers get a string
    output = response.choices[0].message.content or ""
    return output, response.usage.prompt_tokens, response.usage.completion_tokens, latency

def exact_match_scorer(output: str, expected: str) -> float:
    """1.0 for exact match, 0.0 otherwise. Ignores leading/trailing whitespace."""
    return 1.0 if output.strip() == expected.strip() else 0.0


def json_valid_scorer(output: str, **kwargs) -> float:
    """Checks whether output is parseable JSON — model-agnostic."""
    try:
        json.loads(output)
        return 1.0
    except json.JSONDecodeError:
        return 0.0

class EvalRunner:

def __init__(self, db_path: str = "evals.db"):

self.conn = sqlite3.connect(db_path)

self._init_db()

def _init_db(self):

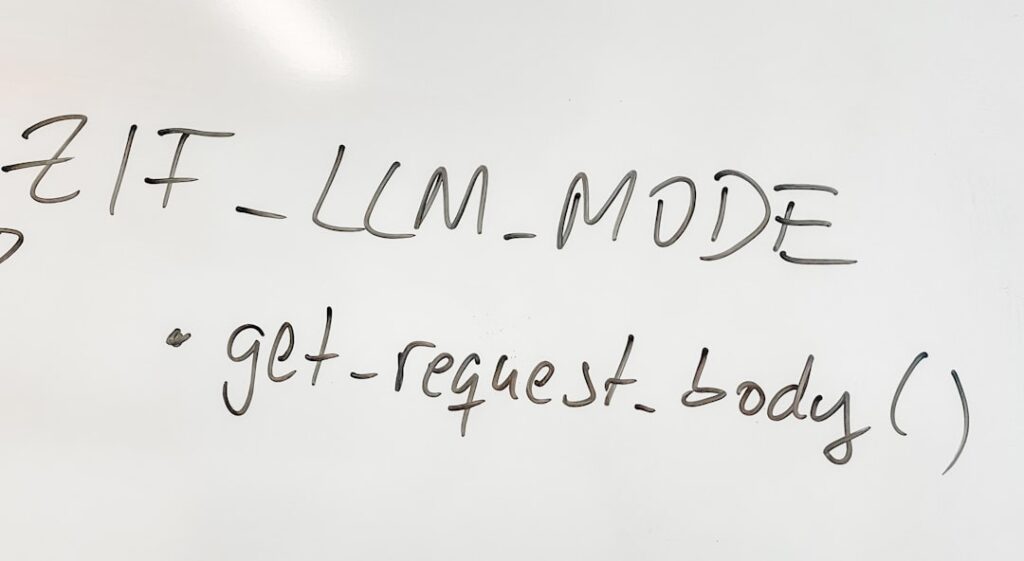

self.conn.execute("""

CREATE TABLE IF NOT EXISTS results (

id TEXT PRIMARY KEY,

case_id TEXT,

model TEXT,

output TEXT,

latency_ms REAL,

input_tokens INTEGER,

output_tokens INTEGER,

cost_usd REAL,

scores TEXT,

timestamp TEXT DEFAULT CURRENT_TIMESTAMP

)

""")

self.conn.commit()

def run(

self,

cases: list[EvalCase],

models: list[str],

scorers: list[Callable],

run_tag: str = "default"

) -> list[EvalResult]:

results = []

for case in cases:

for model in models:

# Route to the right API based on model name prefix

if model.startswith("claude"):

output, in_tok, out_tok, latency = run_anthropic(case.prompt, model)

else:

output, in_tok, out_tok, latency = run_openai(case.prompt, model)

cost = compute_cost(model, in_tok, out_tok)

scores = {}

for scorer in scorers:

score_name = scorer.__name__

scores[score_name] = scorer(

output=output,

expected=case.expected or ""

)

result = EvalResult(

case_id=case.id,

model=model,

output=output,

latency_ms=latency,

input_tokens=in_tok,

output_tokens=out_tok,

cost_usd=cost,

scores=scores

)

results.append(result)

# Persist to DB

row_id = hashlib.md5(f"{run_tag}{case.id}{model}".encode()).hexdigest()

self.conn.execute(

"INSERT OR REPLACE INTO results VALUES (?,?,?,?,?,?,?,?,?,CURRENT_TIMESTAMP)",

(row_id, case.id, model, output, latency, in_tok, out_tok, cost, json.dumps(scores))

)

self.conn.commit()

return results

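This gives you a queryable history of every eval run, as long as each run gets its own run_tag: the row id is a hash of run_tag, case id, and model, so reusing a tag overwrites the earlier rows. You can now ask: “Did my prompt change on Tuesday actually improve JSON compliance, and what did it cost to find out?”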
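One way to get answers out of that history is a small aggregation over the `results` table. The sketch below assumes the table layout created in `_init_db` (a `scores` column holding a JSON object keyed by scorer name); `summarise_by_model` is a hypothetical helper, not part of the harness itself.

```python
import json
import sqlite3
from collections import defaultdict


def summarise_by_model(conn: sqlite3.Connection, score_name: str) -> dict:
    """Mean of one score, number of scored runs, and total cost, per model."""
    scores = defaultdict(list)
    costs = defaultdict(float)
    rows = conn.execute("SELECT model, scores, cost_usd FROM results")
    for model, scores_json, cost_usd in rows:
        costs[model] += cost_usd or 0.0
        # Scores are stored as JSON text, so decode in Python rather than in SQL.
        value = json.loads(scores_json or "{}").get(score_name)
        if value is not None:
            scores[model].append(value)
    return {
        model: {
            "mean_score": sum(vals) / len(vals),
            "runs": len(vals),
            "total_cost_usd": costs[model],
        }
        for model, vals in scores.items()
    }
```

Calling `summarise_by_model(runner.conn, "json_valid_scorer")` after a run would give one row per model with its JSON compliance rate and what the run cost.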

A/B Testing Prompts and Models the Right Way

Running two prompts against the same 10 test cases and picking the winner is not A/B testing — it’s noise. Here’s what rigorous prompt A/B testing actually looks like.

Sample Size and Statistical Significance

For a binary metric (pass/fail, compliant/non-compliant), use a two-proportion z-test. If your baseline pass rate is 75% and you want to detect a 5-point improvement with 80% power at a significance level of 0.05, you need roughly 1,100 samples per variant for a two-sided test (about 860 if you only test for improvement, one-sided). In practice, most teams don’t have a couple of thousand labeled examples, which is why they should start building eval datasets from day one, logging real production inputs with human-graded outputs.
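The sample-size figure can be checked with the standard normal-approximation formula, and the test itself is a few lines with the standard library. This is a sketch under that approximation (unpooled variance for the sample size, pooled variance for the test); the function names are illustrative.

```python
import math
from statistics import NormalDist


def samples_per_variant(p_base: float, p_new: float, alpha: float = 0.05,
                        power: float = 0.80, two_sided: bool = True) -> int:
    """Approximate n per variant needed to detect p_base -> p_new."""
    tail = alpha / 2 if two_sided else alpha
    z_alpha = NormalDist().inv_cdf(1 - tail)
    z_power = NormalDist().inv_cdf(power)
    variance = p_base * (1 - p_base) + p_new * (1 - p_new)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (p_new - p_base) ** 2)


def two_proportion_z_test(pass_a: int, n_a: int, pass_b: int, n_b: int) -> tuple[float, float]:
    """Returns (z, two-sided p-value) for the difference in pass rates, B minus A."""
    p_a, p_b = pass_a / n_a, pass_b / n_b
    pooled = (pass_a + pass_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value
```

For the 75% to 80% scenario this formula gives about 1,090 samples per variant two-sided and about 860 one-sided, so which test you plan to run changes how many labeled cases you need.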

For continuous metrics (scores 0–1), a paired t-test works well because you’re running the same inputs through both variants, and the pairing controls for input-level variance.
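As a sketch of the paired comparison, the t statistic is just the mean per-case difference divided by its standard error. The helper below uses only the standard library and returns the statistic and degrees of freedom; for the p-value you would look those up in a t table or, if SciPy is available, call `scipy.stats.ttest_rel` on the same two lists.

```python
import math
from statistics import mean, stdev


def paired_t_statistic(scores_a: list[float], scores_b: list[float]) -> tuple[float, int]:
    """Paired t statistic for B minus A on the same cases. Returns (t, degrees_of_freedom)."""
    if len(scores_a) != len(scores_b):
        raise ValueError("both variants must be scored on the same cases")
    diffs = [b - a for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    if n < 2:
        raise ValueError("need at least two paired cases")
    spread = stdev(diffs)  # sample standard deviation of the per-case differences
    if spread == 0:
        raise ValueError("all differences are identical; t is undefined")
    t = mean(diffs) / (spread / math.sqrt(n))
    return t, n - 1
```

The lists must be aligned by case: `scores_a[i]` and `scores_b[i]` have to come from the same input, otherwise the pairing that makes this test efficient is lost.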

Controlling for Confounders

When comparing models, keep everything else constant: same system prompt, same temperature, same max tokens, same test cases, same time of day (models can behave differently under load). When comparing prompts, keep the model constant. Never change both at once — you won’t know what drove the difference.

LLM-as-Judge: Setup and Limitations

For open-ended quality assessment, LLM-as-judge scales where human annotation can’t. The setup that works best in practice:

def llm_judge_scorer(output: str, expected: str, task_description: str = "") -> float:
    """
    Uses GPT-4o as a judge. Returns a 0.0–1.0 score.

    Bias note: GPT-4o will slightly favour GPT-4o outputs. For cross-model
    evals, use Claude as judge when testing OpenAI models and vice versa,
    then average the two scores.
    """
    client = openai.OpenAI()
    judge_prompt = f"""You are an impartial evaluator. Score the following AI output on a scale of 1-5.

Task: {task_description}
Expected output (reference): {expected}
Actual output: {output}

Criteria:
- Factual accuracy (does it match the reference on key facts?)
- Completeness (does it cover all required elements?)
- Format compliance (does it follow the requested format?)

Respond ONLY with a JSON object: {{"score": <1-5>, "reasoning": "<one sentence>"}}"""
    response = client.chat.completions.create(
        model="gpt-4o",
        messages=[{"role": "user", "content": judge_prompt}],
        response_format={"type": "json_object"}
    )
    result = json.loads(response.choices[0].message.content)
    return (result["score"] - 1) / 4  # normalise to 0.0–1.0

Critical limitation: LLM judges are consistent, not accurate. They’ll reliably score outputs the same way each time, but that way may systematically differ from what your users actually value. Calibrate your judge against 50–100 human-graded examples before trusting it for production decisions.
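Calibration can be as simple as comparing judge scores with human grades on the same examples. The sketch below reports three numbers; the function name, the 0.25 tolerance, and the choice of statistics are assumptions for illustration, and both score lists are expected on the same 0.0 to 1.0 scale.

```python
def judge_calibration(judge_scores: list[float], human_scores: list[float],
                      tolerance: float = 0.25) -> dict[str, float]:
    """Compare judge scores with human grades for the same examples."""
    if len(judge_scores) != len(human_scores) or not judge_scores:
        raise ValueError("need two non-empty lists of equal length")
    n = len(judge_scores)
    diffs = [j - h for j, h in zip(judge_scores, human_scores)]
    return {
        # Average size of the disagreement, ignoring direction.
        "mean_abs_error": sum(abs(d) for d in diffs) / n,
        # Positive bias means the judge is more generous than the humans.
        "bias": sum(diffs) / n,
        # Share of examples where judge and human are within `tolerance`.
        "within_tolerance": sum(abs(d) <= tolerance for d in diffs) / n,
    }
```

A judge with low error but a steady positive bias is still usable for comparing two variants; a judge with high error on the cases your users care about is not, however consistent it looks.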

Building Your Eval Dataset: The Unglamorous Part

Your eval harness is only as good as your test cases. The fastest path to a high-quality eval set:

- Log production inputs from day one. Every real input your agent handles is a candidate eval case. Store them with a sampling rate of 5–10% to keep volume manageable.

- Label failures first. When your agent fails in production, add that case to your eval set immediately with the correct output. Failure cases are more valuable than successes for catching regressions.

- Seed with synthetic adversarial cases. For each failure mode you care about (hallucination, format non-compliance, wrong entity extraction), generate synthetic cases that probe it specifically. Use Claude or GPT-4o to generate variants of real inputs.

- Separate your eval set from your prompt development set. Don’t optimise your prompt on the same cases you use to evaluate it. You’ll overfit. Use 80% for development, hold out 20% for final evaluation.
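Both the production sampling and the 80/20 split above can be made deterministic by hashing an identifier instead of calling a random number generator, so a case never moves between sets when the dataset grows. This is one possible sketch; the helper names, the salts, and the use of SHA-256 are assumptions.

```python
import hashlib


def _bucket(key: str, salt: str) -> float:
    """Map a key to a stable pseudo-random number in [0, 1)."""
    digest = hashlib.sha256(f"{salt}:{key}".encode()).hexdigest()
    return int(digest[:8], 16) / 0x100000000


def assign_split(case_id: str, holdout_fraction: float = 0.2) -> str:
    """'holdout' for roughly holdout_fraction of cases, 'dev' for the rest."""
    return "holdout" if _bucket(case_id, "split") < holdout_fraction else "dev"


def should_log(request_id: str, sample_rate: float = 0.05) -> bool:
    """True for roughly sample_rate of production requests."""
    return _bucket(request_id, "log") < sample_rate
```

Using different salts for the two decisions keeps them independent, so logged requests are not skewed towards either split.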

Tracking Improvements Over Time

The point of an eval harness is longitudinal tracking. Every time you change a prompt, swap a model, or update your retrieval pipeline, run your full eval suite and commit the results alongside the code change. This gives you a history that answers questions like: “When did our JSON compliance rate drop below 90%? Was it the prompt change on March 3rd or the model upgrade on March 7th?”
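A simple way to act on that history is a regression gate that compares the current run's mean metrics with a stored baseline and flags any that dropped by more than a tolerance. The sketch below assumes metrics where higher is better; `find_regressions` and the 0.02 default are illustrative choices.

```python
def find_regressions(baseline: dict[str, float], current: dict[str, float],
                     max_drop: float = 0.02) -> dict[str, tuple[float, float]]:
    """Metrics that fell by more than max_drop, as {name: (baseline, current)}.

    Assumes higher is better; metrics missing from `current` are skipped.
    """
    regressions = {}
    for name, base_value in baseline.items():
        if name not in current:
            continue
        if base_value - current[name] > max_drop:
            regressions[name] = (base_value, current[name])
    return regressions
```

In a deployment workflow you would fail the build when the returned dict is non-empty, and update the stored baseline only when a change is accepted.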

Tools worth knowing: LangSmith handles this well if you’re already in the LangChain ecosystem — it logs traces, supports human annotation, and has decent eval tooling. Braintrust is purpose-built for evals and is what I’d recommend for teams that want a managed solution without the LangChain coupling. PromptFoo is excellent for lightweight prompt comparison and runs locally. For teams already using n8n or Make for automation workflows, the SQLite-backed harness above integrates cleanly — you can trigger eval runs as part of a deployment workflow and write results to any backend you already have.

When to Use Which Approach

Here’s the honest breakdown by team type:

- Solo founder / early-stage: Skip managed eval tools for now. Use the harness above with 50–100 hand-labeled cases, run it before every prompt change, and store results in SQLite. Focus on one or two metrics that directly map to your product’s success criteria. This takes a day to set up and will save you weeks of debugging blind.

- Small team (2–8 engineers) shipping a production agent: Adopt Braintrust or LangSmith. The collaboration features and annotation UI pay off when more than one person is touching prompts. Budget roughly $50–200/month depending on volume.

- Enterprise / regulated environment: You need audit trails, human-in-the-loop annotation workflows, and the ability to run evals on-premise. Build on the open-source harness pattern and integrate with your existing data infrastructure. Don’t let a third-party SaaS hold your eval data.

Bottom line on LLM evaluation metrics: start simple, stay consistent, and measure what actually breaks your product in production. A small eval set you run on every change beats a sophisticated framework you never actually use. The teams that improve fastest aren’t the ones with the most elaborate tooling — they’re the ones who established a baseline and never shipped a change without measuring it.

Editorial note: API pricing, model capabilities, and tool features change frequently, so always verify current details on the vendor’s website before building in production. Code examples are tested at time of writing; pin your dependency versions to avoid breaking changes.